So you have a handful of brand new ESXi servers and want VMs to automagically move here and there based on host availability and resource usage. vCenter has you covered with DRS and HA, but obviously you need to put all the hosts into a cluster for these things to work. What you might not know is that there are 3 ways of creating a cluster, which differ in certain respects, and you will regret it if you choose the wrong one. Trust me, I learned it the hard way.

Note: we are using ESXi 7.0 and vCenter 7.0 here.

Prerequisites

Read through the whole article before doing anything. A lot of decisions need to be made that influence earlier steps, so don’t try doing it in one pass. If you fuck up, delete the cluster and start again.

After ESXi 6.7U1, a host must be in maintenance mode before you can move it into a cluster. This requires powering off or migrating away all the VMs running on it. So if you don’t want a service disruption, your running VMs must be able to survive even if you shut down the beefiest of your hosts.
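A quick way to sanity-check that last requirement is to add up what is left once the biggest host is gone. The sketch below is only back-of-the-envelope arithmetic: the `Host` class, the function name and the choice of "most memory" as the definition of "beefiest" are all assumptions made for illustration, and it ignores overcommit, reservations and per-VM placement.

```python
from dataclasses import dataclass


@dataclass
class Host:
    name: str
    cpu_mhz: int     # total CPU capacity of the host
    memory_gb: int   # total RAM of the host


def survives_losing_biggest_host(hosts, vm_cpu_mhz, vm_memory_gb):
    """Return True if the remaining hosts can carry the total VM demand
    after the host with the most memory is taken offline.

    Assumption: "beefiest" means most memory, with CPU as a tie-breaker.
    """
    if len(hosts) < 2:
        return False  # nowhere to move the VMs to
    biggest = max(hosts, key=lambda h: (h.memory_gb, h.cpu_mhz))
    rest = [h for h in hosts if h is not biggest]
    enough_cpu = sum(h.cpu_mhz for h in rest) >= vm_cpu_mhz
    enough_memory = sum(h.memory_gb for h in rest) >= vm_memory_gb
    return enough_cpu and enough_memory
```

If this returns False for your numbers, plan for a maintenance window instead of a live migration.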

If you need HA (HA restarts your VMs on another host if their host dies), you will need at least 2 network-mountable datastores. They can be NFS exports, VMFS volumes on iSCSI block devices, or a mix of the two. It’s recommended that the 2 datastores live on different storage hosts, and if you use iSCSI you should set up multipath iSCSI.

Networking Considerations

If your hosts have exactly the same NICs, you can consider using LACP (LAG) between the hosts and the switch; otherwise I recommend the plain old port-based load balancing built into the distributed switch.

Creating a Cluster

Creating a cluster involves 3 easy steps:

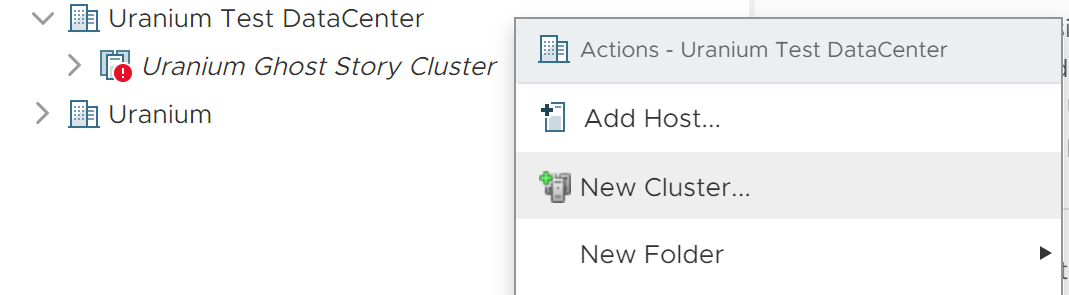

1. Right-click on the datacenter

2. Click “New Cluster…”

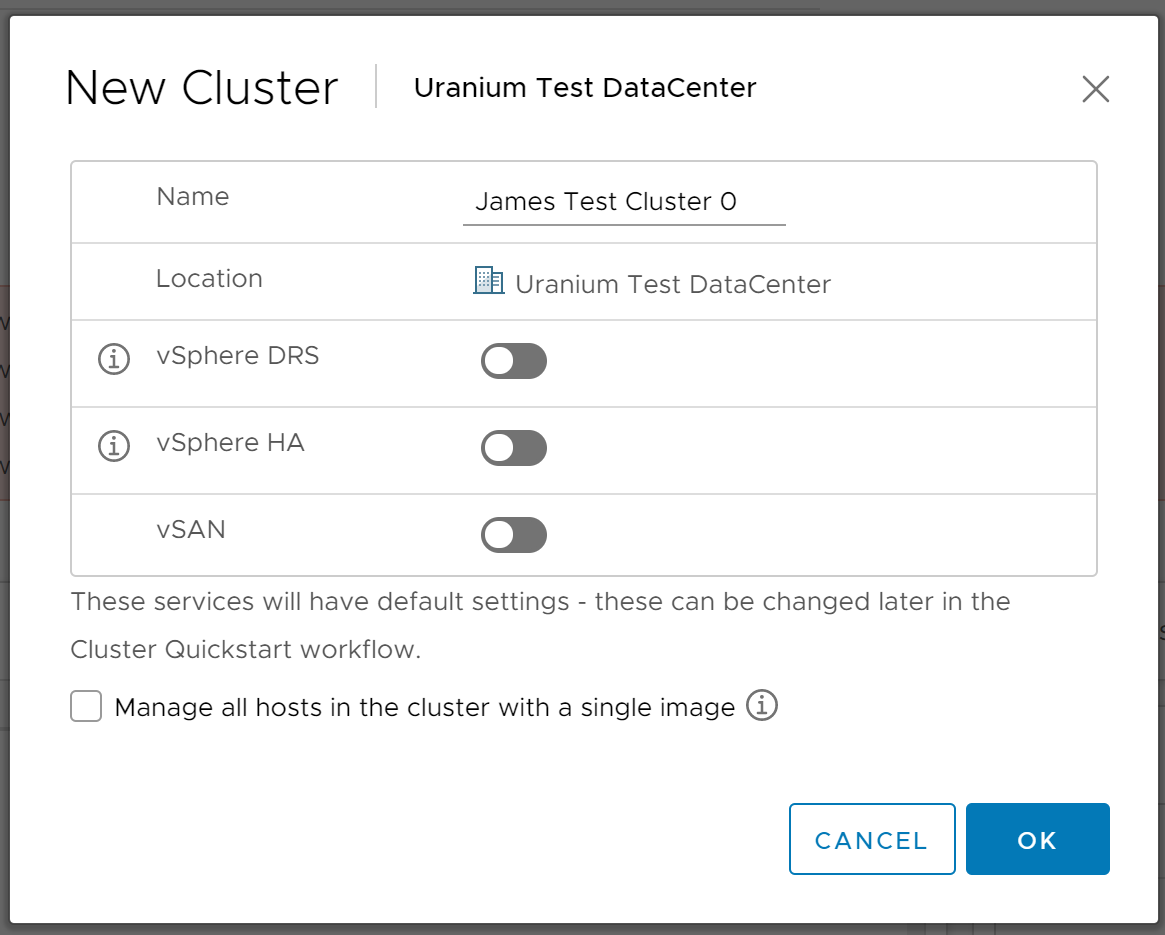

3. Click the big blue “OK” button.

There is really nothing to note here; DRS, HA and vSAN can be enabled later, so there is no harm in not configuring them now. (In fact, I encourage you not to configure them now.) If all the hosts are and will be of the same model and configuration, then you probably want to use the “single image” option; you cannot change it later, so choose wisely now.

Adding Hosts to the Cluster

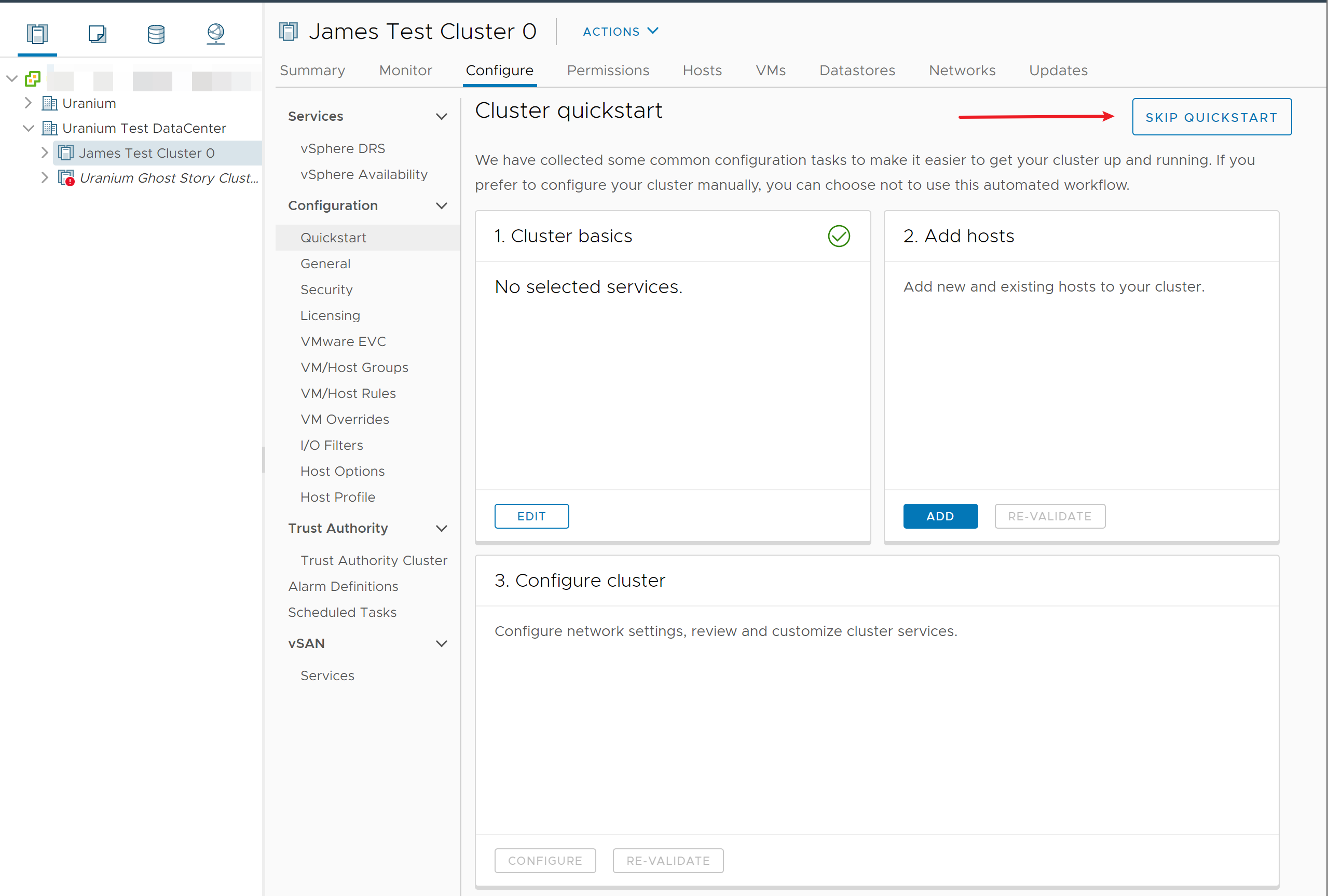

After creating your cluster, go to the cluster quickstart page to add the hosts. There is no need to add the hosts to vCenter before that, although it won’t hurt much. But I don’t recommend dragging the hosts into the cluster in the left tree panel; in my experience this can easily create a desync. Put the hosts you are going to add to the cluster into maintenance mode (you need to shut down all their VMs first).

Here is your first option: the “Skip Quickstart” button denoted by the red arrow.

This button is for the brave ones. Pressing it means opting out of the quickstart forever for this cluster, and you will need to set up everything manually. And there is no official documentation on how to set up everything manually. Good luck and godspeed.

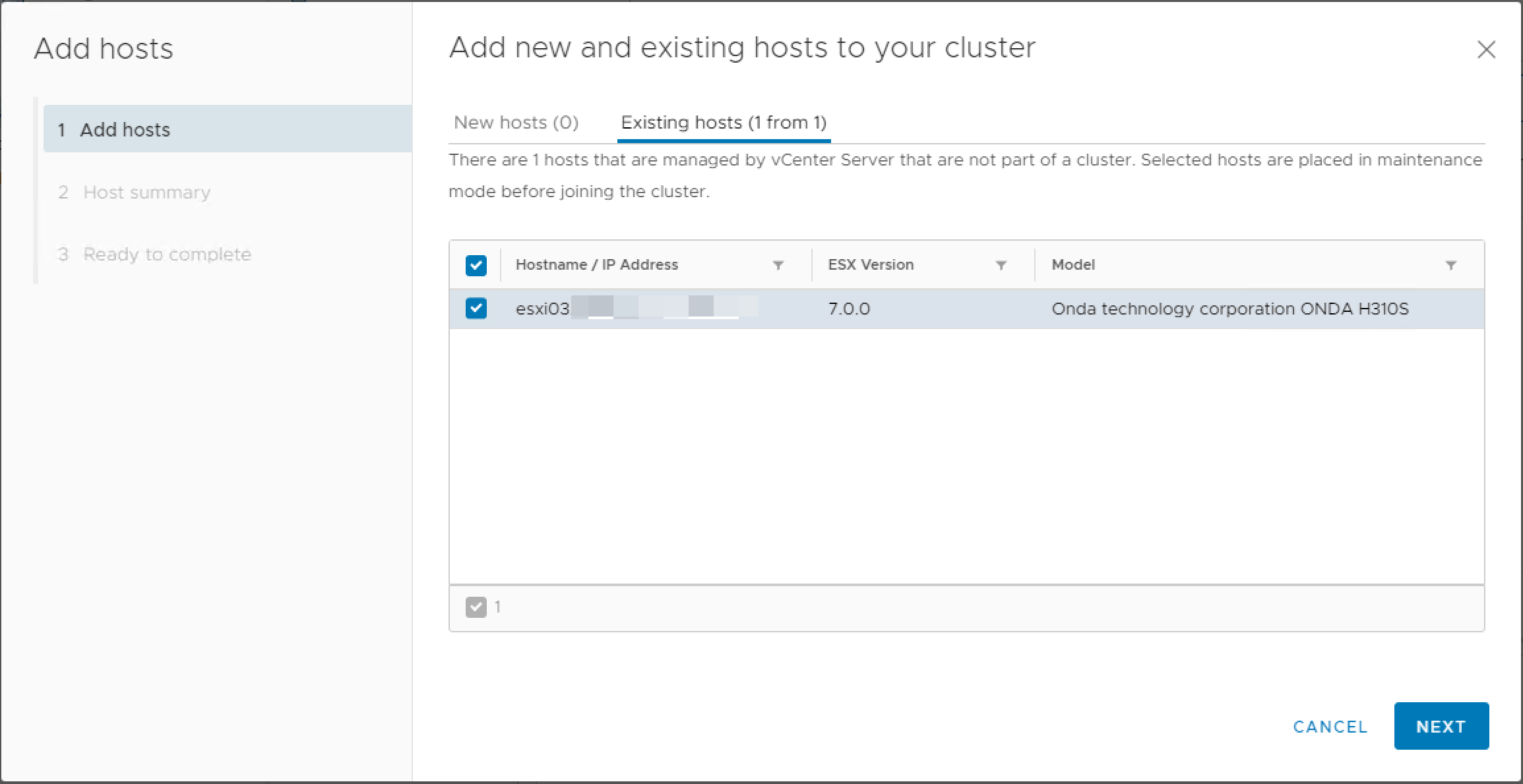

The “Cluster basics” step allows you to enable DRS and HA, and I still don’t recommend enabling them now. For now you only need to add the hosts using the blue “Add” button, which brings you to a 3-step wizard where only the first step is actually useful:

(And you may notice the image quality went down a bit since I’m using another vCenter which happens to have a host free for me to do the screenshots. Sorry.)

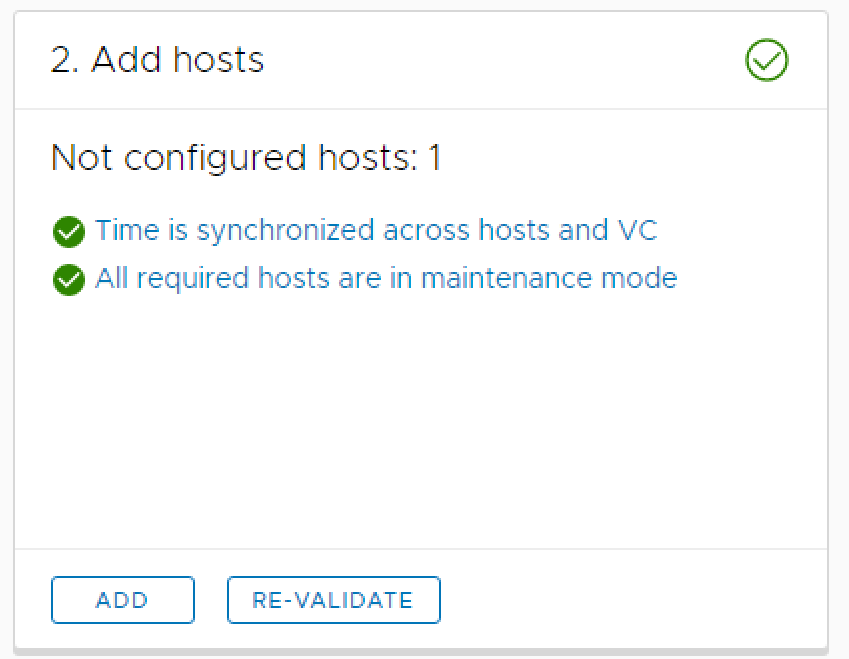

After adding your hosts, there will be some checks running and you will see:

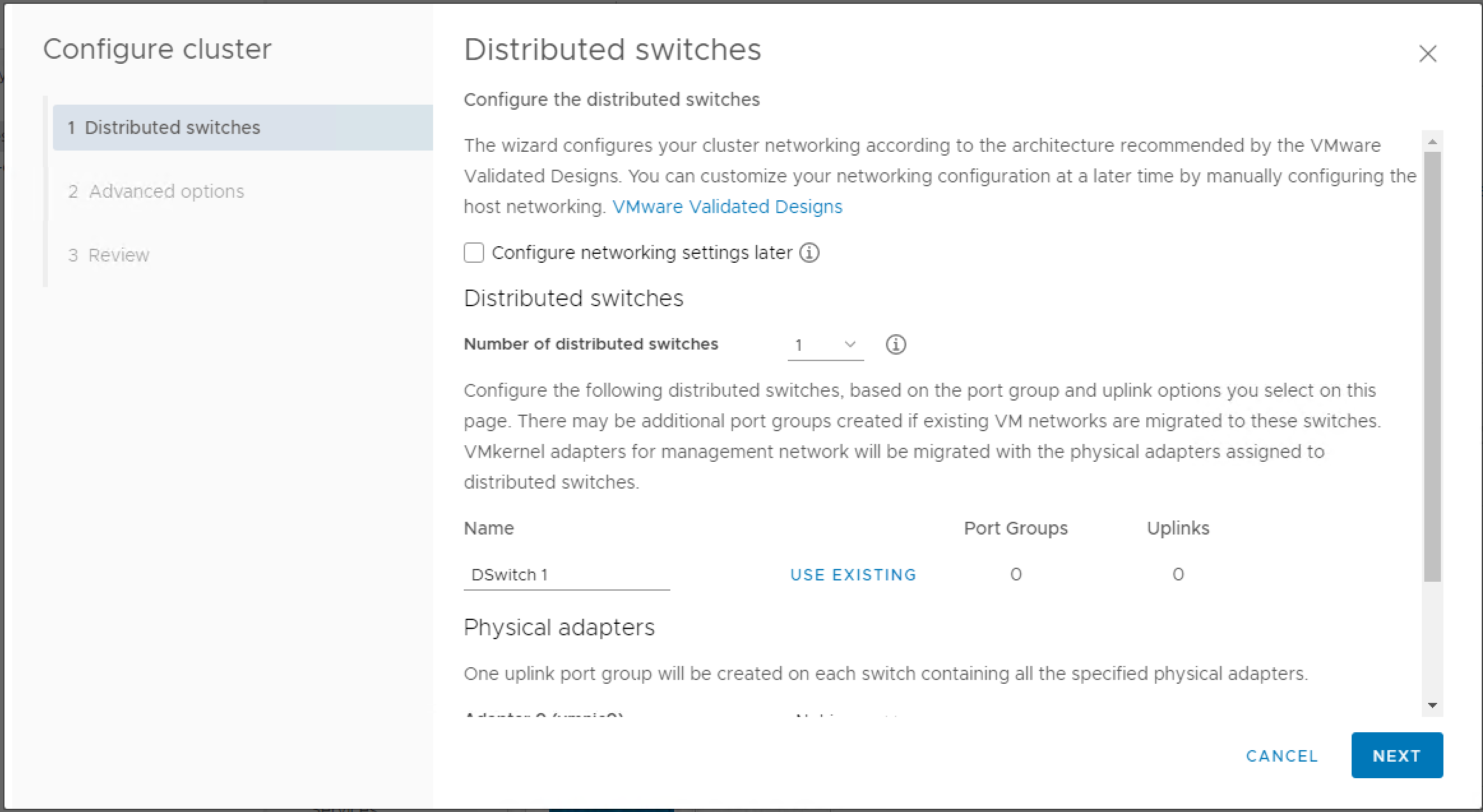

There are different checks for different cluster states, but everything should be A-OK for a new cluster. (If some checks fail, then you are in trouble.) Now you might have noticed that the “Configure” button on step 3 is now available. Click it and meet our second life choice today:

Here you need to choose whether you want the quickstart wizard to manage the distributed switch (and all the host networking options) for you. If your hosts’ NICs are exactly the same and they are wired identically, then you can safely use this option and enjoy your life. But if your hosts have different numbers of NICs and a mixture of 1G/10G/40G ports, I’d recommend ticking “Configure networking settings later”. You will not be able to change this later. See the next section for what to do here.

The other options in this wizard are all pretty easy to understand. I recommend enabling EVC at the level that your oldest CPU supports, since it will make DRS happier.
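Picking that EVC level boils down to finding the oldest CPU generation among your hosts. Here is a minimal sketch of the idea; the generation labels and their ordering are illustrative assumptions for an all-Intel cluster, not the exact mode names vCenter shows, and the function name is made up for this example.

```python
# Illustrative ordering, oldest to newest. Assumption: an all-Intel
# cluster; extend or replace the list to match your hardware.
INTEL_GENERATIONS = [
    "sandybridge",
    "ivybridge",
    "haswell",
    "broadwell",
    "skylake",
    "cascadelake",
]


def lowest_common_generation(host_generations):
    """Return the oldest generation present, which is the highest
    baseline every host in the list can still provide."""
    if not host_generations:
        raise ValueError("need at least one host")
    # list.index raises ValueError for a label missing from the table,
    # which is the desired behaviour for unknown hardware.
    return min(host_generations, key=INTEL_GENERATIONS.index)
```

For hosts reporting `["skylake", "haswell", "broadwell"]`, the baseline to pick would be the Haswell level.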

Cluster Networking Setup

First I need to clarify some things:

- You can put multiple NICs connected to a single switch into a vSwitch and it will not create loops by default

- A NIC can only belong to one vSwitch at a time, so if you want networking on both a traditional vSwitch and a distributed switch at the same time, you need multiple NICs

- But don’t worry if you want to use a distributed switch and have only one NIC on the host; there is an option to do the migration seamlessly

- The host will try to roll back if you send it a bad network config that disconnects it from vCenter, but the rollback will not always succeed

- If you have SFP/SFP+ ports, I recommend using “Beacon probing” as the network failure detection method, because the link status is not reliable in my experience (this setting must be changed per port group)

- vMotion VMKernel ports cannot use link-local IPv6 addresses; you must assign them an IP outside fe80::/64
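The link-local rule in the last bullet is easy to check before you type addresses into the wizard. This is a small sketch using Python's standard `ipaddress` module; the function name is made up for this example. Note that the module treats the whole fe80::/10 block as link-local, which is slightly wider than the fe80::/64 mentioned above, and it also flags IPv4 link-local addresses (169.254.0.0/16).

```python
import ipaddress


def usable_for_vmotion(address):
    """Return True if the address is not a link-local address.

    This only checks the link-local rule; it says nothing about
    routing, VLANs or duplicate addresses.
    """
    return not ipaddress.ip_address(address).is_link_local
```

For example, `usable_for_vmotion("fe80::1")` is False, while a unique local address such as `fd00::10` passes.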

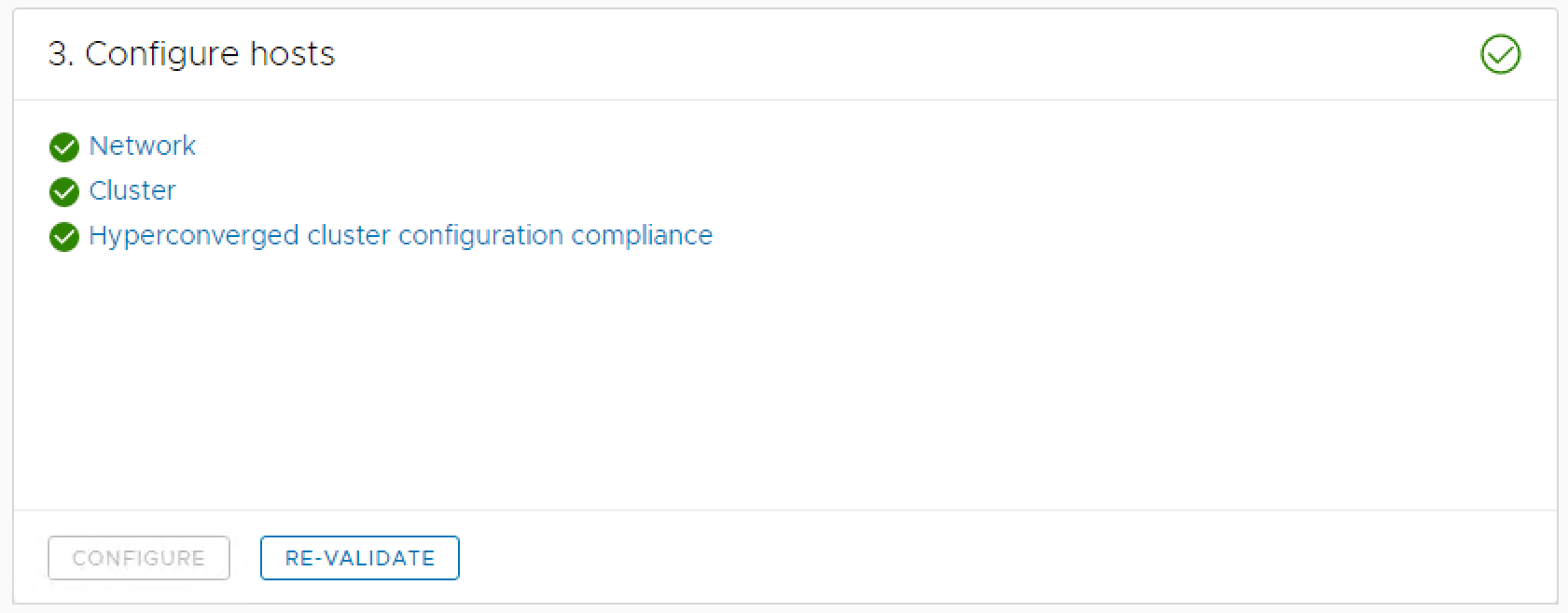

After finishing these steps, you should be getting all green on the “Configure hosts” step.

Networking with the Quickstart Wizard

Great, so you have ideal cluster hardware and want to proceed with the quickstart wizard. Now you just need to create one distributed switch, choose a good name, and assign some of your NICs to the uplink port group of your new distributed switch. The default VMKernel virtual NICs (vmk0 and vmk1) will be created and automatically migrated to the distributed switch; you might need to manually migrate your VMs to the distributed switch later. You also need to assign 2 IPs per host.

DON’T EVER try to remove the port groups for management traffic and vMotion traffic created by the quickstart wizard, or you will fuck things up and have to tear down the cluster and start over. You have been warned.

Networking with Your Own

You need to achieve the following objectives:

- Every host’s management VMKernel port (usually vmk0) has an IP address and can connect to vCenter

- Every host’s vMotion VMKernel port (usually vmk1, but you can use vmk0 too) has an IP address and can connect to the others

- You can connect to the vCenter and all the hosts’ vmk0

- You might want to provide internet access to the vCenter so it can check updates

There are no limitations on how to achieve that, but I’ll share what I do:

- Create a distributed switch

- Assign all the hosts in the cluster to the distributed switch (you can assign hosts outside the cluster too; it doesn’t matter much)

- If you don’t have a dedicated VLAN for vMotion traffic: create 1 port group for both management traffic and vMotion traffic

- If you have a dedicated VLAN for vMotion traffic: create 2 port groups, one for management traffic (vmk0) and one for vMotion traffic (vmk1)

- Optionally create port groups for your VMs (they can be in the same VLAN or not)

- Assign some uplinks to the distributed switch, and migrate vmk0 and vmk1 to the corresponding port groups (use the “Add and Manage Hosts” wizard in the distributed switch’s context menu)

- Assign IPs to all the vmk0’s and vmk1’s (vmk0’s should be able to reach vCenter and vmk1’s should be able to reach each other)

- Migrate the VMs to the new port groups (you might do it in step 4 or do it manually now)

- Remove the old vSwitch port groups

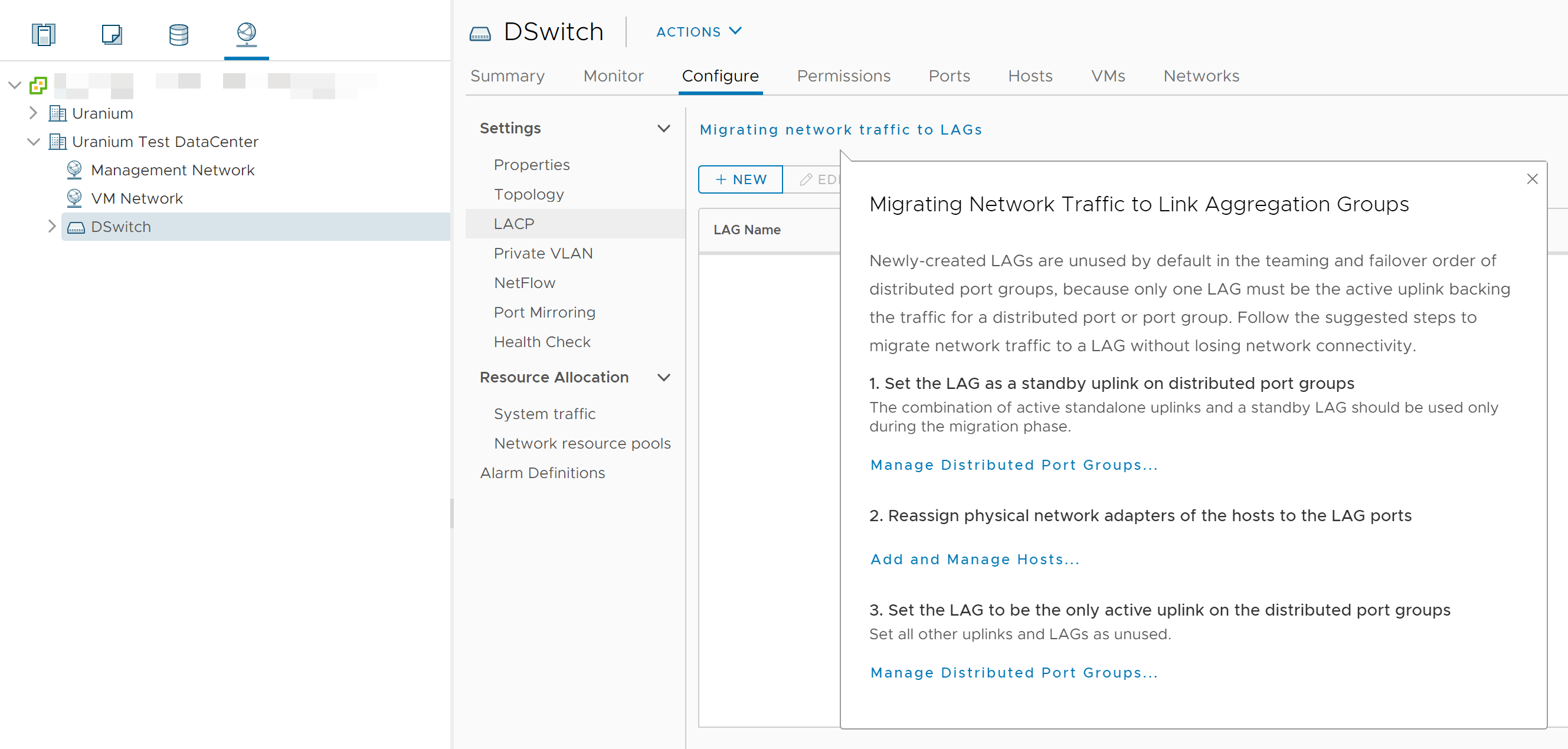
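Before typing the vmk1 addresses into the wizard, it can help to check the address plan offline. The sketch below assumes the simplest layout, where all vMotion ports sit in one unrouted subnet; the host names, addresses and function name are made up for illustration, and a routed vMotion network would need a different check.

```python
import ipaddress


def outside_subnet(vmk_addresses, subnet):
    """Return the {host: address} entries that do not fall inside subnet.

    vmk_addresses maps a host name to the planned IP of its vMotion
    VMKernel port.
    """
    network = ipaddress.ip_network(subnet)
    return {
        host: address
        for host, address in vmk_addresses.items()
        if ipaddress.ip_address(address) not in network
    }
```

An empty result means every planned address is in the same subnet; anything returned is a host that probably got a typo in its address.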

LACP/LAG Setup

Use the LACP setup located in the distributed switch’s configuration tab to migrate the uplinks to a LAG. Again, you need exactly the same number of LACP ports on every one of your hosts, and you need to configure your physical switch to make it work.

Moving the vCenter to the Distributed Switch

vCenter is generally installed in the same cluster it manages, and if you have no spare uplink NICs for the regular vSwitch, your only option is to move vCenter to a distributed switch. Remember that a host will try to roll back if you push a network config that disconnects it from vCenter? Now you have a chicken-and-egg problem. There is a solution to this problem, but you need to do some precise planning. First of all, we need to talk about the design of a distributed switch.

A distributed switch consists of multiple port groups, and port groups contain ports. A port can be bound to a physical NIC (in uplink port groups), a VMKernel port or a VM virtual NIC; all the ports in a port group must be bound to the same type of thing. Some settings (VLAN availability, promiscuous mode, MAC address changes, etc.) can be set at the switch level and overridden in turn by a port group or a port.

There are 2 types of port groups:

- “Static binding”: the binding must be created by a vCenter (it cannot be created on a standalone ESXi host), the performance is higher, and the port override config is preserved

- “Ephemeral”: the ports and the bindings are created on the fly, so you can operate them directly on ESXi; the performance is somewhat worse, and all the overrides are lost as soon as you remove the binding (because the port is deleted along with it)

vCenter doesn’t really need much network performance (are you managing a cluster with a thousand hosts? If so, I hope you are not reading this basic tutorial), so I really recommend putting vCenter’s virtual NIC into an ephemeral port group in case something goes wrong and you need to log in to the ESXi host to recover vCenter’s network. The migration steps are simple:

- While initially creating the cluster, leave the host running vCenter outside but prepare enough resources in the cluster for the vCenter VM

- Disable lockdown mode on the hosts (if available)

- Create an ephemeral port group for vCenter only

- Wait for all the hosts in the cluster to sync the new network configuration (very important, just grab a cup of coffee and wait a minute)

- Shut down the vCenter VM

- Log on to the host where vCenter is running and manually deregister the vCenter VM

- If you are using local storage on every host, manually move the vCenter VM files to a host already inside the cluster

- Log on to the destination host, register the vCenter VM (answer “I moved it” when asked), bind the VM’s NIC to the new port group, and power it on

- Wait while vCenter boots (it’s really slow), and then verify the connectivity between vCenter and the hosts

- Add the host previously running vCenter to the cluster

I don’t recommend using ephemeral port groups for everything due to the performance impacts and scalability issues.

HA and DRS Setup

If you need HA and DRS (otherwise why do you need a cluster?), now is a good time to set them up. Use the configuration under the “Services” section in the cluster configuration tab; do not use the quickstart wizard.

FAQ

Common Cluster Check Failures

Distributed Port Group on Distributed Switch X is missing.

You’ve chosen quickstart wizard managed networking and you happened to delete the vMotion port group. Now you are fucked; either restore from last night’s backup, or tear down the cluster and start over.

VDS compliance check for hyperconverged cluster configuration

The help link will lead you to a very misleading and irrelevant KB article, but it really means either the VMKernel/uplink bindings are different on different hosts in the cluster, or you’ve deleted something that’s managed by the quickstart wizard.

If you use the regular vSwitch with VMKernel NICs, remember that the port group names across hosts in the same cluster must be exactly the same (case sensitive).
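Case differences in names are hard to spot by eye, so a short script can help. This sketch assumes you have already collected the port group names per host by whatever means you prefer; the function name and the sample names are made up for illustration.

```python
def missing_port_groups(port_groups_by_host):
    """Map each host to the port group names it lacks, compared with
    the union of names seen on all hosts.

    The comparison is case sensitive, so "vMotion" and "vmotion"
    count as two different port groups.
    """
    all_names = set().union(*port_groups_by_host.values())
    report = {}
    for host, names in port_groups_by_host.items():
        missing = sorted(all_names - set(names))
        if missing:
            report[host] = missing
    return report
```

An empty dictionary means every host has the same set of names. With `{"esx1": ["Management", "vMotion"], "esx2": ["Management", "vmotion"]}` both hosts are reported, each missing the other's spelling.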

Other Problems

Some of the VMs Disconnected from the Network After Migration

- Check if you have port config differences on the (physical) switch

- Try assigning the VM’s virtual NIC to another port group, then assigning it back
